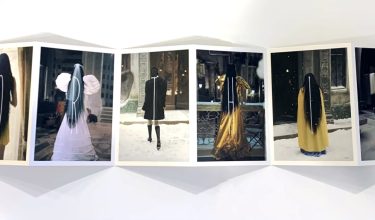

Kodak brings cinematic magic to life with 'Poor Things' promotional postcards

January 11, 2024

GUA Conference reveals next milestones for the KODAK PRINERGY Workflow Platform

Lively exchange at the 15th European GUA Conference

December 07, 2023

Kodak inkjet application versatility: Do more with less

November 15, 2023
